Navigate a Dynamic Obstacle Course

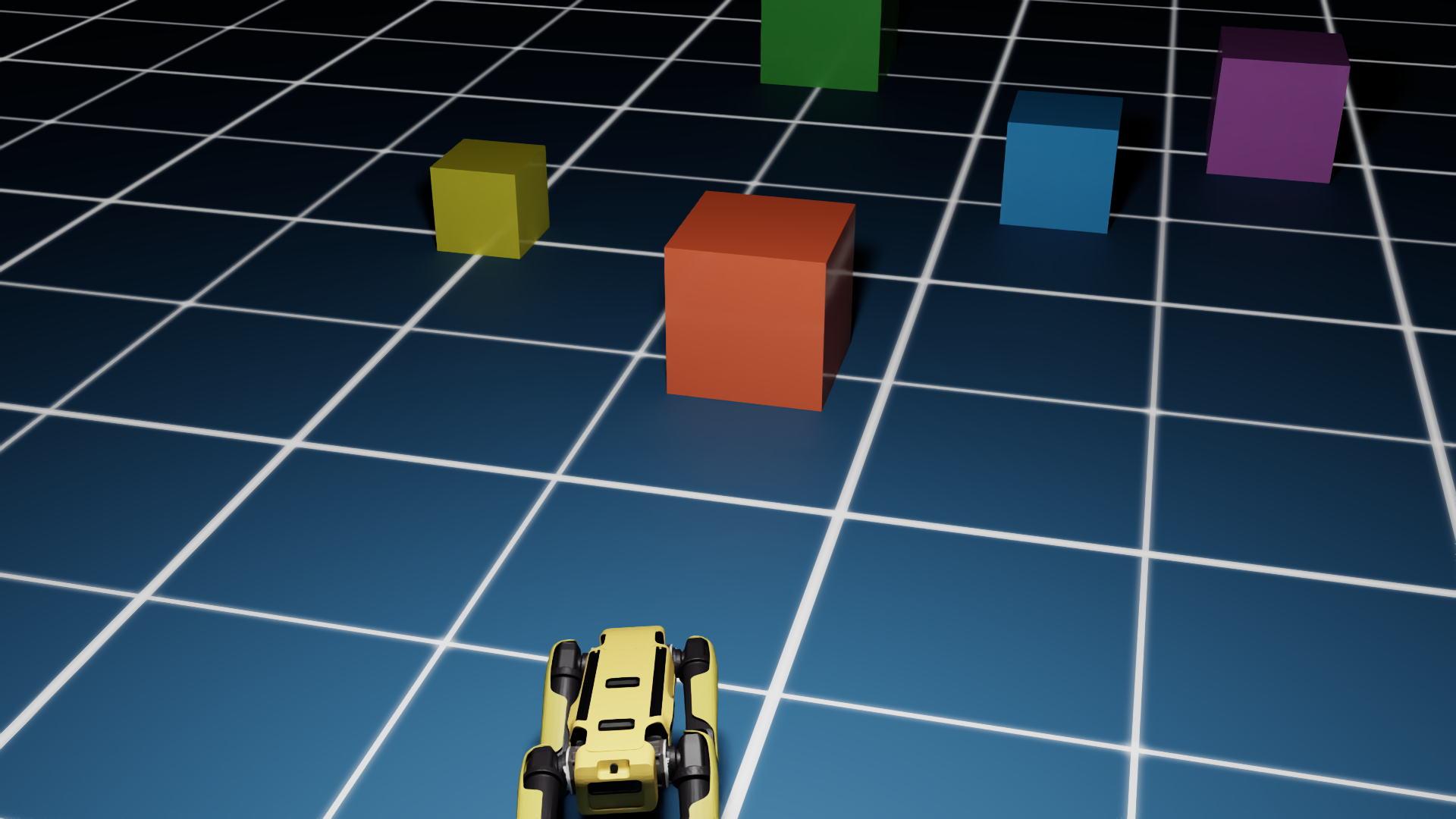

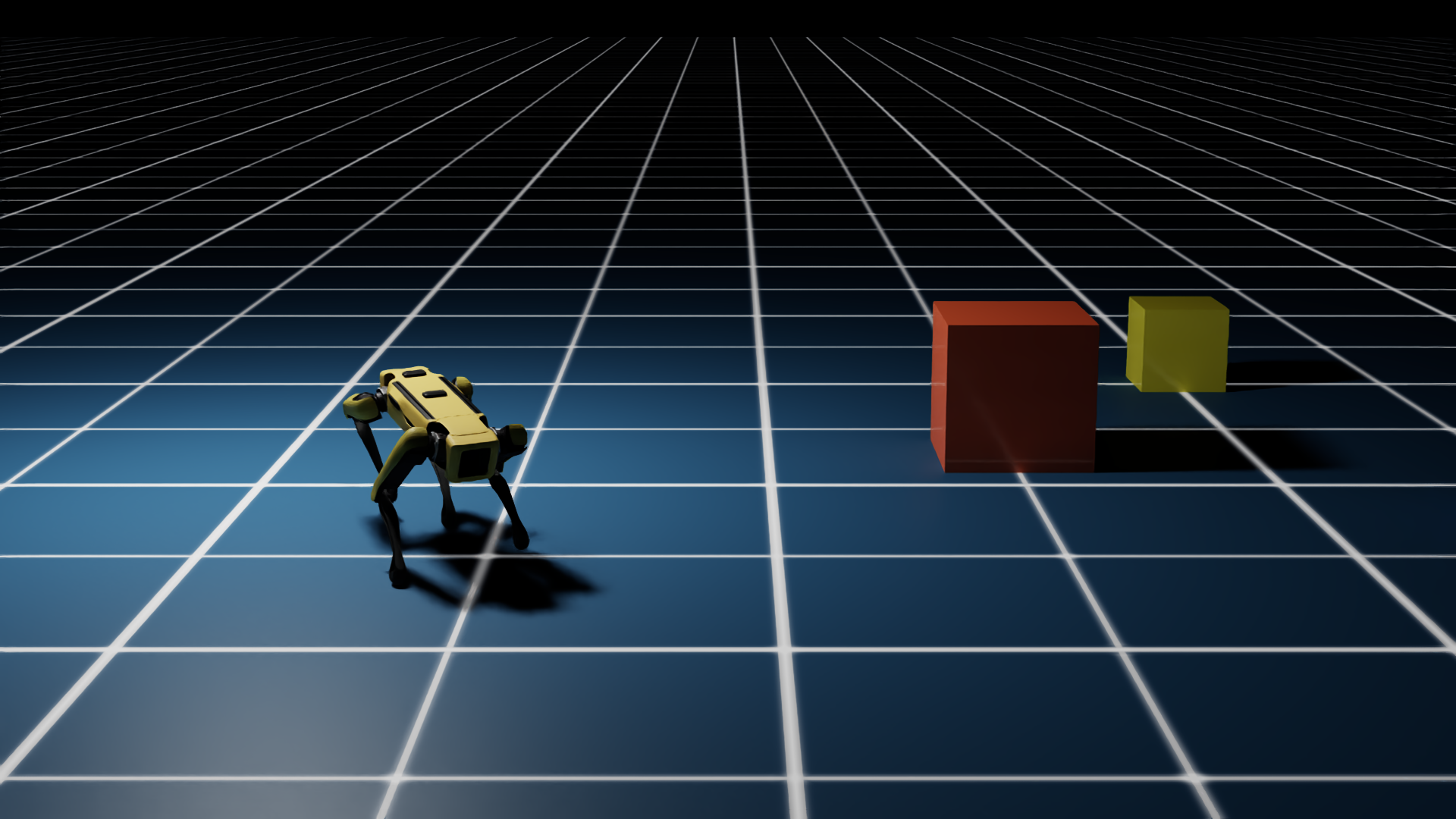

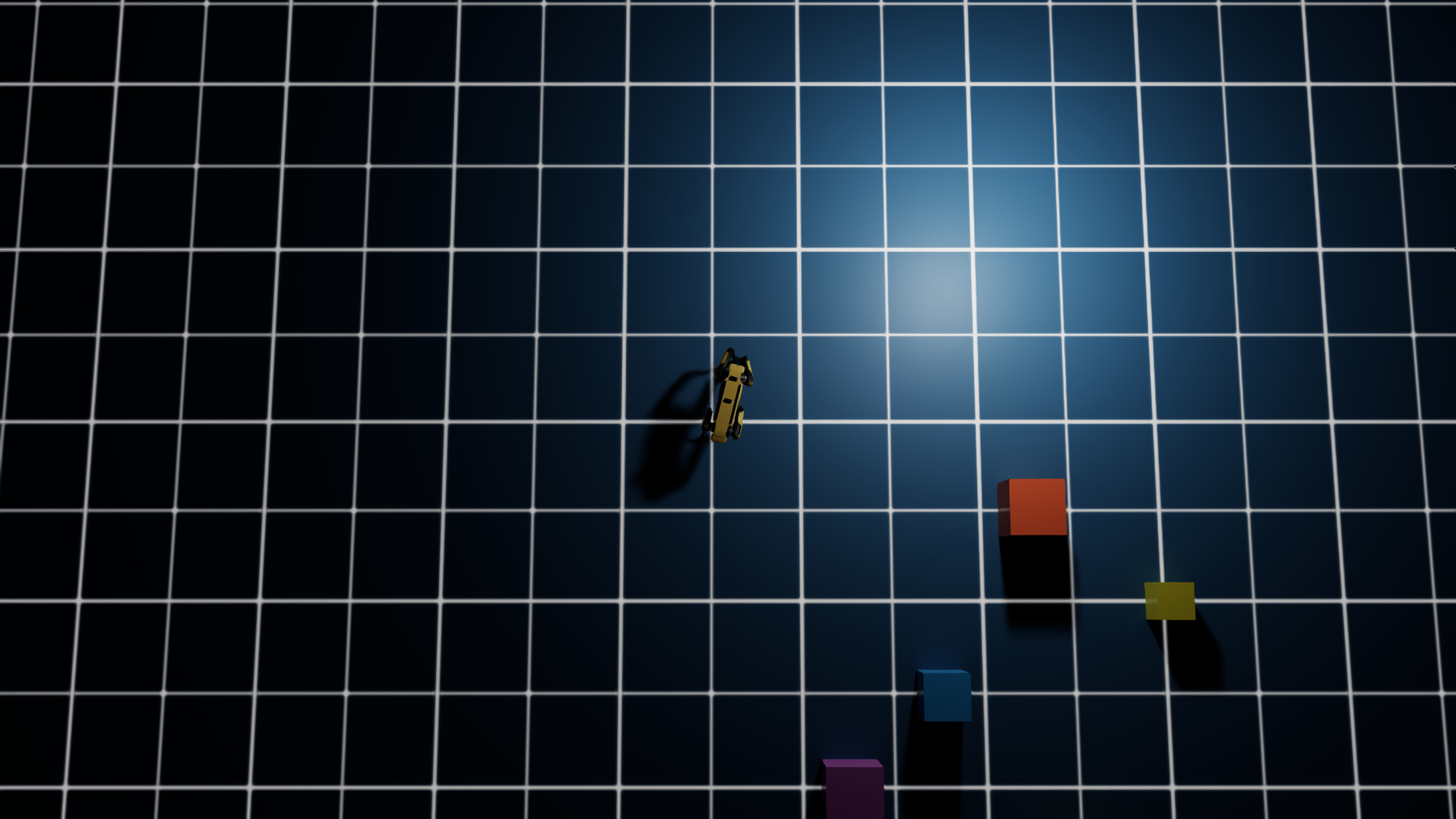

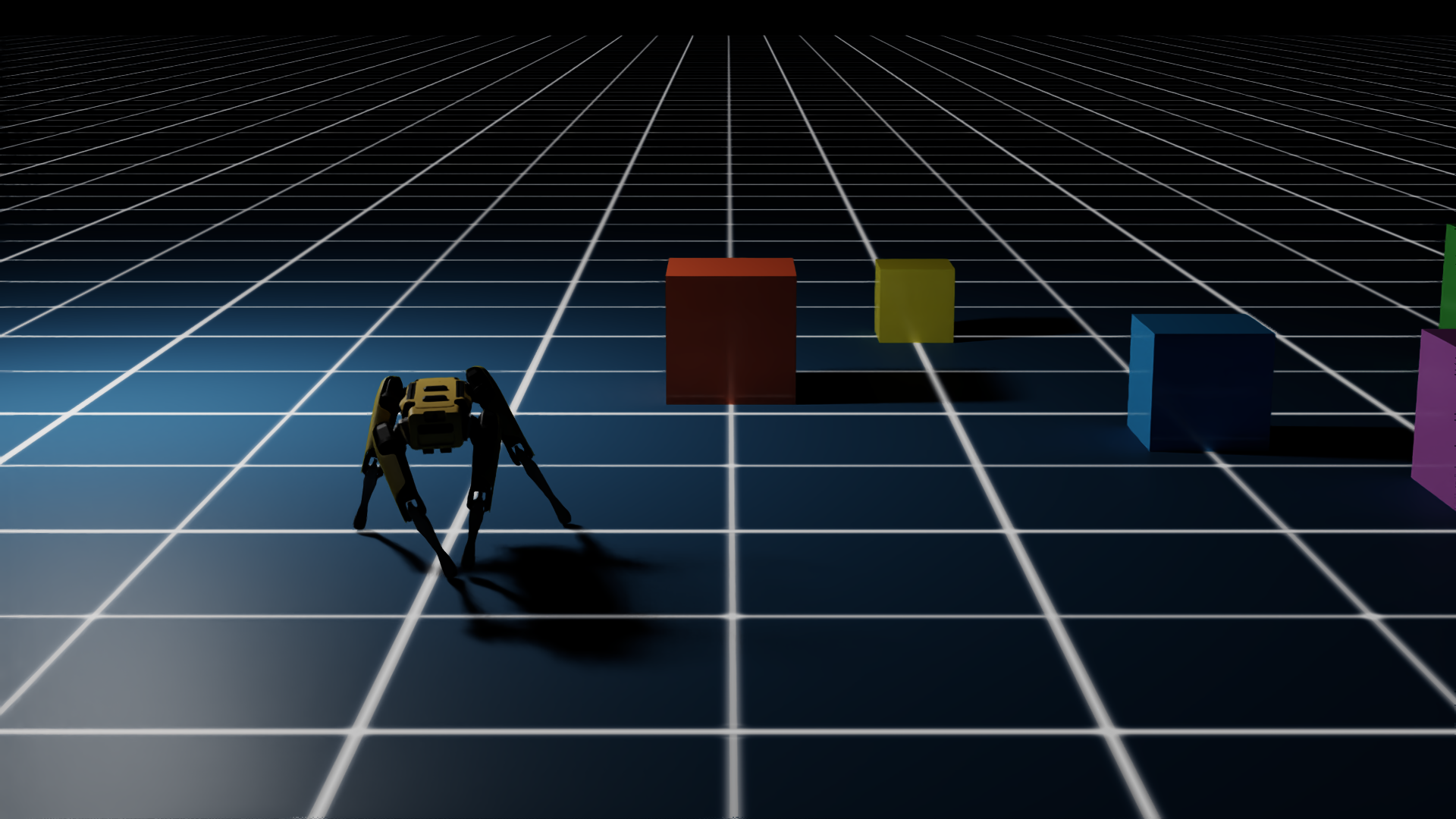

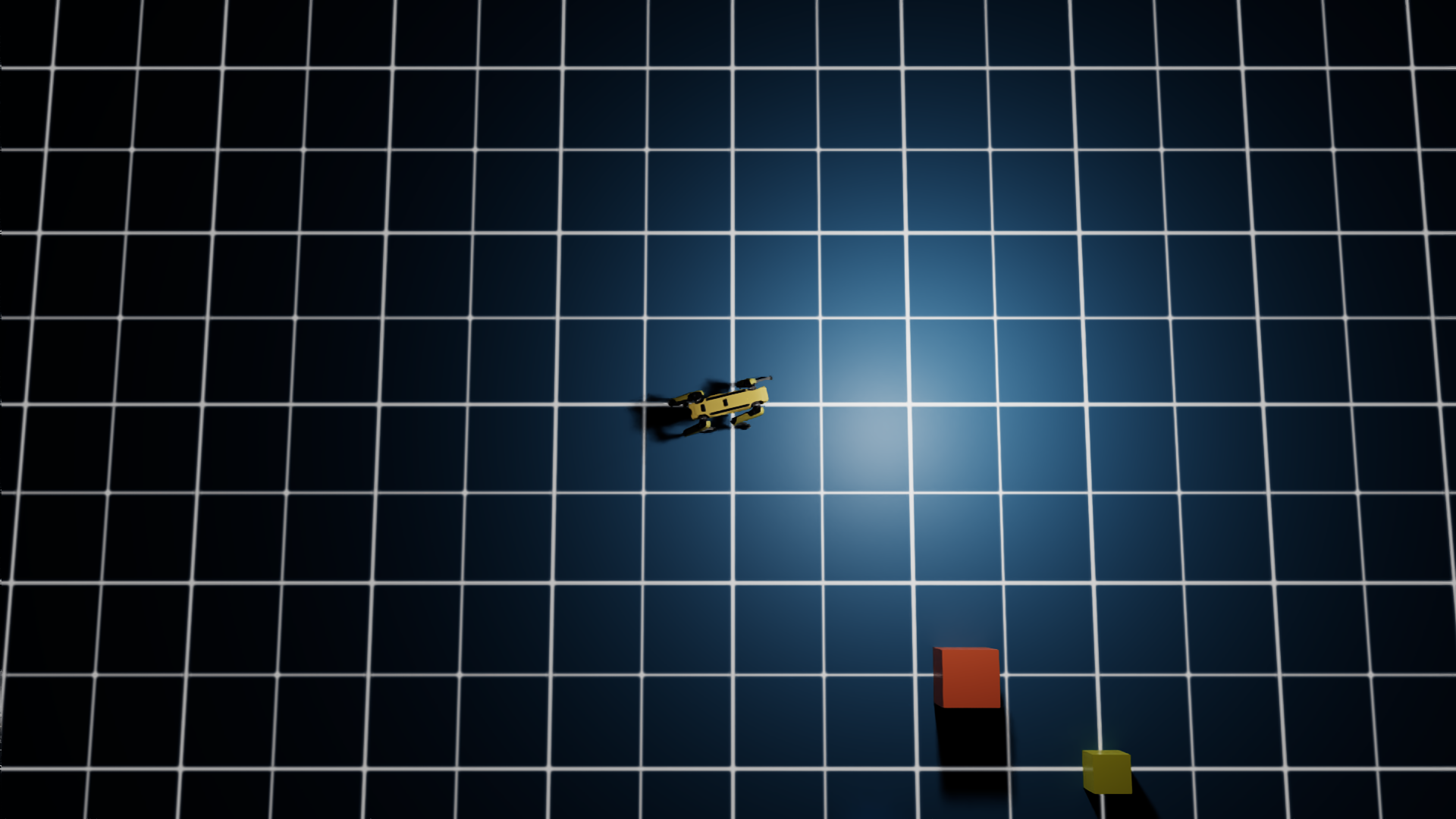

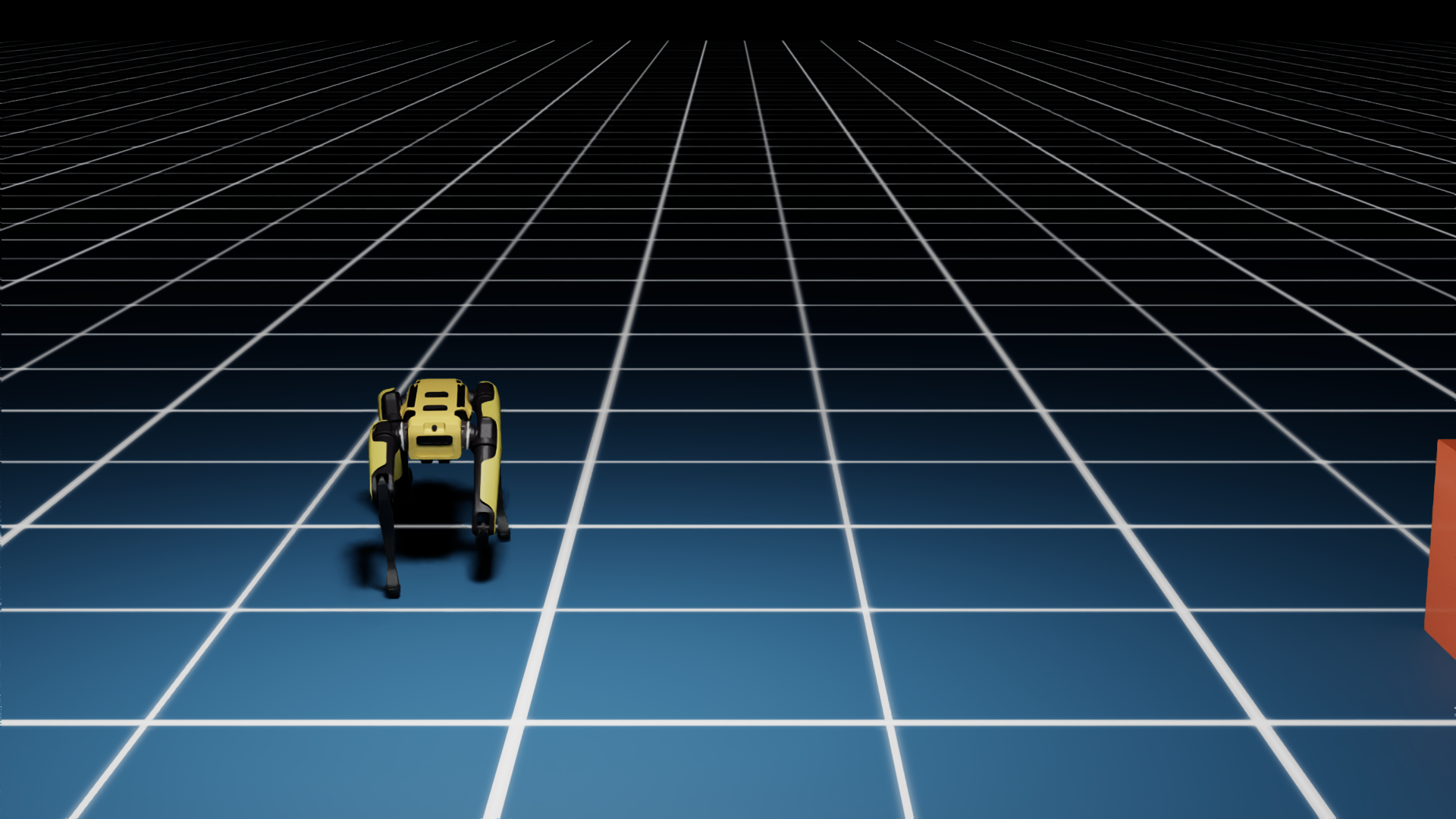

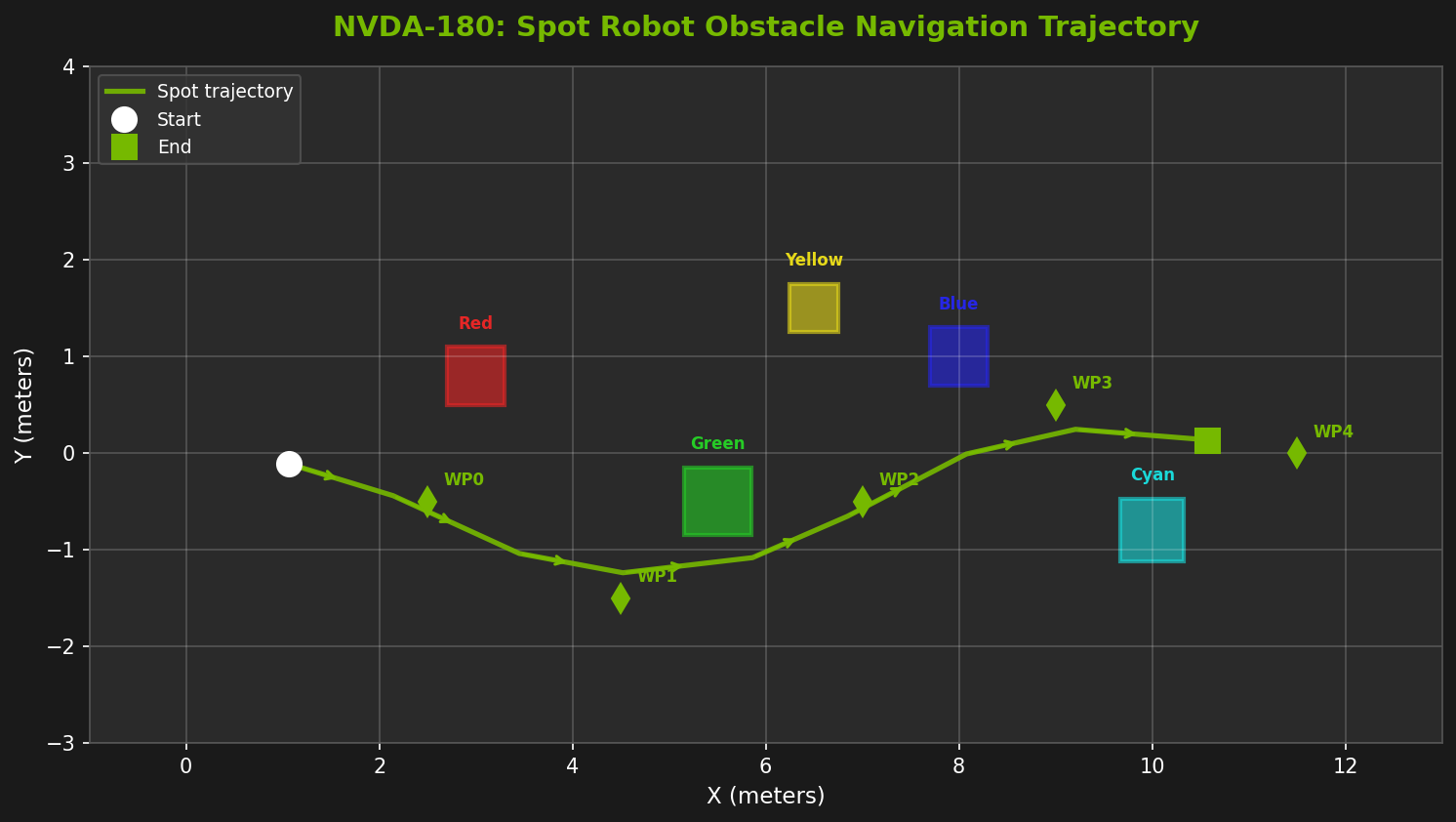

Deploy Boston Dynamics' Spot robot in a simulated environment with 5 colored obstacles arranged across a 12-meter grid. The robot must walk through the course autonomously using an RL-trained locomotion policy while 3 cameras record the run from different angles.

- 12-DOF quadruped with 48-dimensional observation space

- TorchScript JIT policy, simulated at a 200 Hz physics rate

- 5 waypoints with proportional heading control

- 3 synchronized camera angles: tracking, side, bird's-eye

- 300 simulation steps producing 45 RTX-rendered screenshots
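The waypoint-following item above can be illustrated with a small sketch of proportional heading control. This is not the project's actual controller: the function names, the gain `k_yaw`, the yaw-rate limit, the fixed forward speed, and the `reach_radius` threshold are all hypothetical values chosen for illustration. It assumes the locomotion policy accepts a velocity command of the form (forward velocity, lateral velocity, yaw rate), which is common for RL-trained quadruped policies but is not stated in the source.

```python
import math


def wrap_angle(angle):
    """Wrap an angle in radians to the interval [-pi, pi)."""
    return (angle + math.pi) % (2 * math.pi) - math.pi


def heading_command(pos, yaw, waypoint, k_yaw=1.0, max_yaw_rate=1.0, forward_speed=1.0):
    """Return an assumed (v_x, v_y, yaw_rate) command that steers toward a waypoint.

    pos and waypoint are (x, y) tuples in meters; yaw is in radians.
    All default parameter values are illustrative assumptions.
    """
    # Bearing from the robot to the waypoint in the world frame.
    desired_yaw = math.atan2(waypoint[1] - pos[1], waypoint[0] - pos[0])
    # Heading error, wrapped so the robot turns the short way around.
    error = wrap_angle(desired_yaw - yaw)
    # Proportional term, clamped to the allowed yaw rate.
    yaw_rate = max(-max_yaw_rate, min(max_yaw_rate, k_yaw * error))
    return forward_speed, 0.0, yaw_rate


def next_waypoint_index(pos, waypoints, idx, reach_radius=0.5):
    """Advance to the next waypoint once the current one is within reach_radius."""
    if idx < len(waypoints) and math.dist(pos, waypoints[idx]) < reach_radius:
        return idx + 1
    return idx
```

In a loop of this shape, each simulation step would read the robot's base position and yaw, call `next_waypoint_index` to decide which of the 5 waypoints is active, and pass the result of `heading_command` to the policy as its velocity command. A purely proportional term is the simplest choice here; it has no integral or derivative action, so the clamp on yaw rate is what keeps large initial heading errors from producing abrupt turns.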